Writing a questionnaire for a newsmaker survey is different from designing a customer satisfaction or general market research survey. Such general surveys are often academic, clinical, even boring; with a newsmaker survey, by contrast, you are looking for punch and attitude. For instance, in a survey about online privacy, Abine added the response “Walk around naked in locker rooms,” which turned up in the headline of the release: “From Walking Around Naked to Updating Facebook Privacy Settings, Younger Generation’s Views On Privacy Are Changing.” Starting with the end in mind, your team should brainstorm the headlines you would love to see, if the results warrant them.

Academic discipline does matter when it comes to question wording. Many common problems, such as asking leading questions or encouraging acquiescence bias, can produce inaccurate survey results and will reduce your credibility with reporters. Good question wording is as much art as science; for instance, one tiny survey (18 words!) reviewed by Researchscape suffered from 5 different problems that would affect results. Once your questionnaire is drafted, make sure a professional market researcher reviews it for errors and rewrites it where necessary before you collect responses.

One Questionnaire, Multiple Releases

A well-designed questionnaire can provide material for two or three news releases. For instance, a monthly Bankrate.com survey provides an update on financial security using a battery of standard questions, but it also includes some topical questions, whose results are often reported in a separate news release.

On average, a survey news release reports the findings from 5 questions (not counting panel demographic questions). You don’t need that many, though: 15% of 2013 news releases reported on a single question! A 15- to 20-question survey can therefore easily supply content for 3 or 4 news releases.

One way to get more content out of a question is to discuss statistically significant differences by subgroup. For instance, Bankrate.com reported key differences by age, educational background, household income, and even political party, as appropriate. Many online panels have already asked their panelists detailed demographic questions, enabling you to break out results by age, ethnicity, gender, region, household income, marital status, and employment status without asking those questions in your own survey.
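Whether a gap between two subgroups is “statistically significant” is commonly checked with a two-proportion z-test. The sketch below is an illustration under stated assumptions, not Researchscape’s or Bankrate.com’s method: it treats each subgroup as a simple random sample (which online panels only approximate), assumes reasonably large subgroups, and uses hypothetical counts rather than real survey results. The function name `two_proportion_z_test` is likewise hypothetical.

```python
import math


def two_proportion_z_test(yes_a, n_a, yes_b, n_b):
    """Two-sided z-test for H0: subgroups A and B give the same share of 'yes' answers.

    Assumes simple random samples and large enough subgroups for the
    normal approximation to hold. Returns (z, p_value).
    """
    p_a = yes_a / n_a
    p_b = yes_b / n_b
    # Pooled share of 'yes' answers, assuming no real difference between subgroups
    p_pool = (yes_a + yes_b) / (n_a + n_b)
    # Standard error of the difference under that assumption
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # Two-sided p-value from the standard normal: P(|Z| >= |z|) = erfc(|z| / sqrt(2))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: 120 of 300 younger respondents agree vs. 90 of 300 older ones
    z, p = two_proportion_z_test(120, 300, 90, 300)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

With these hypothetical counts (40% vs. 30%), z comes out at roughly 2.57 and p at roughly 0.01, so the gap would usually be reported as significant at the 95% level. The same 10-point gap with only 50 respondents per subgroup would not pass that bar, which is why subgroup breakouts need adequate sample sizes.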

Bankrate.com illustrates another best practice for leveraging questionnaires: repeating them periodically, which yields trend data and additional news angles. Reporters like repeated surveys, too. “I prefer surveys that compare results to earlier periods because they are more than a snapshot in time,” said freelance journalist Michael Fitzgerald, who frequently writes about innovation. “They are also conducted with a little more objectivity: you can say what you want to say with certain questions but now you have to at least be consistent.”

“The challenge with online surveys is they are not as randomized as a well-done telephone survey. But—you can get a wider audience online than you can in a sociology survey with 19 year olds who need 20 bucks.” — Michael Fitzgerald, freelance journalist

One Questionnaire, Many Purposes

Marketing budgets are often tight and must be stretched to accomplish different goals. While most newsmaker surveys are conducted solely for purposes of generating content and publicity, that does not have to be the case.

One Researchscape client was using a newsmaker survey to promote a product launch, but had lingering questions about pricing and messaging. Survey respondents were given a price test and were asked which message resonated the most. Another Researchscape client needed to understand how their installed base differed from the wider market and fielded some questions for internal use. In both cases, these other questions were omitted from the PR campaign and were not reported as part of the results.

Economies of Scale

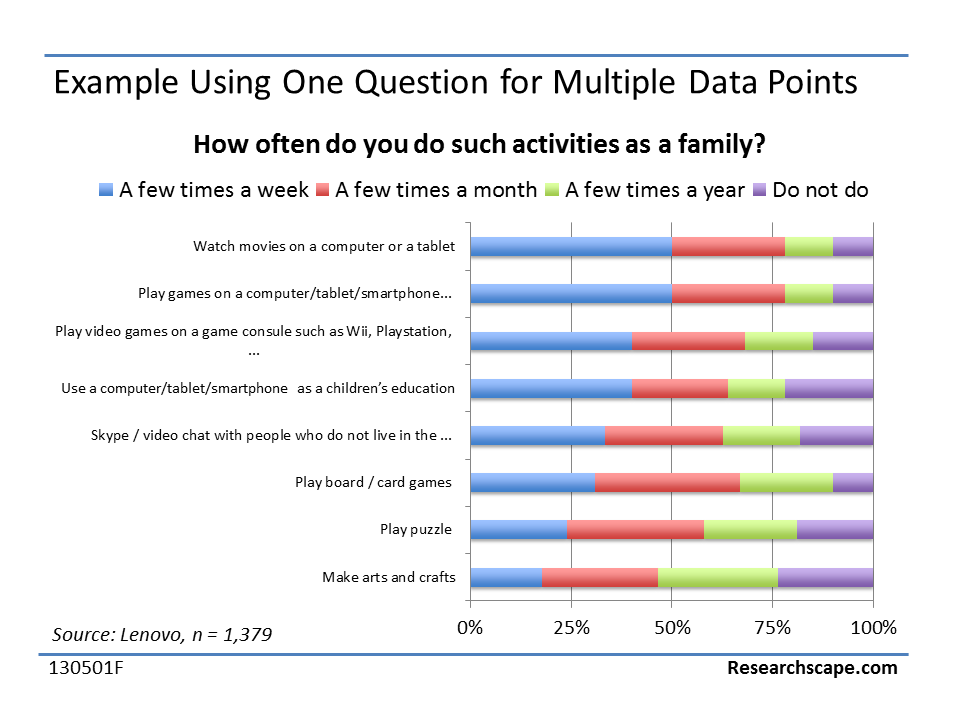

The best economy of scale comes from repeating the same questions periodically, but it is not the only approach. Different questions pack different amounts of information. A simple yes-no question may be needed to set up skip patterns for subsequent questions, but it won’t give you much to report. A matrix question, in which respondents rate item after item on a common scale, goes much further, as in the Lenovo Table PC release, which published the results of just one question.

This is an excerpt from the free Researchscape white paper, “Amp Up News Releases with Newsmaker Surveys.” Download your own copy now:
