Most survey news releases simply summarize the survey’s key findings and offer no supporting materials. A modest additional effort can produce releases that are more likely to be picked up.

Optimizing Releases for Reporters

“Surveys are great for me for a blog post or for shorter, more compact, newsy things,” said Fitzgerald. “Ideally, I want a survey that has the appearance of independence, but they are usually company surveys. I don’t have the time to go through the questions to see if they are balanced or asking questions the way an independent researcher would ask them. I want transparency, some sense of its statistical value, what the sample size is, whether it is a one-off or something comparing to earlier periods. I’m looking for timeliness, something that supports my beat, that I can use as a standalone item or that opens a question for a feature. I don’t have the time to do too much work—the publicist should highlight the data and put it into context.”

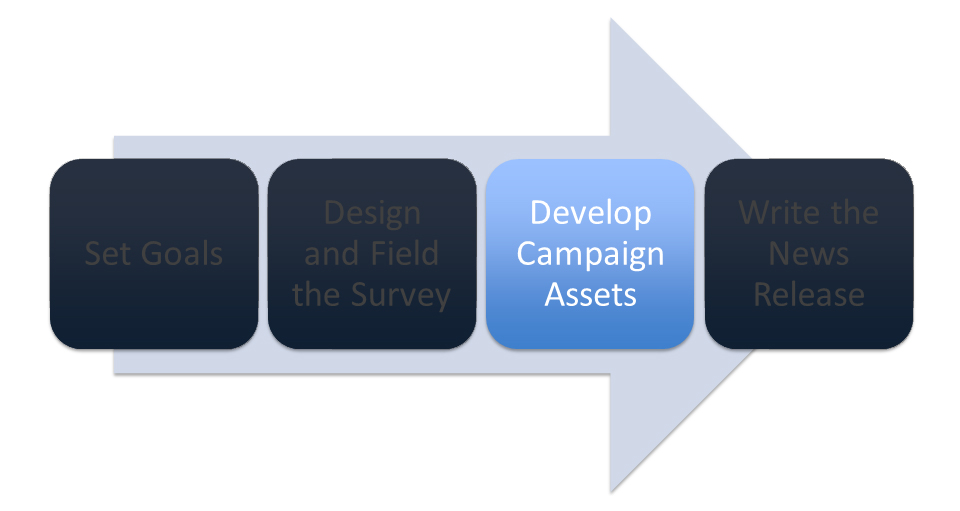

Some assets to help busy reporters:

- Exhibits – Only 8% of 2016 releases included charts and graphs, either as attachments embedded in a multimedia news release or as links from the release (as distinct from “infographics”). Those that did include exhibits often had low-quality graphs unlikely to be used by reporters. Charts with simple, professional formatting can easily be embedded into a story; garish, branded charts in unusual color schemes are less likely to be used. Surprisingly, quite a few exhibits fail to include the sponsoring organization’s name.

- Topline Results – Only occasionally offered, these documents include the question wording and the answers selected for each question for the overall sample. Some include demographic breakouts by question; some don’t. “I want to see what the questions are and what order they are asked in. This is a classic source of bias, unintentional or intentional,” said Sherman.

- Methodology FAQ – While most releases include a paragraph about the survey methodology, in the interests of space such statements are often short and don’t answer all the questions reporters are trained to ask. “Too many releases don’t include a methodology section,” said Sherman, “or what they do include you could write on the back of a matchbook and write it around the logo! Think about the questions that a journalist would ask and answer them. If there are weaknesses in the methodology, then just be ready to admit it.” Concurred Fitzgerald: “I like to see in a press release language that is carefully couched that uses shades of gray. I will give a little more credibility to someone who acknowledges in some form that they are not making sweeping claims about their study. Sweeping claims just raise red flags. Pew Research tries to be very careful and point towards what they think the data indicates, saying ‘We think you can apply it more broadly to represent some aspect of society.’”

The American Association for Public Opinion Research (AAPOR) and the Poynter Institute train reporters to ask the following questions about survey research results:

- Who paid for the poll and why was it done?

- Who did the poll?

- How was the poll conducted?

- How many people were interviewed and what’s the margin of sampling error?

- How were those people chosen? (Probability or nonprobability sample? Random sampling? Non-random method?)

- What area or what group were people chosen from? (That is, what was the population being represented?)

- When were the interviews conducted?

- How were the interviews conducted?

- What questions were asked? Were they clearly worded, balanced and unbiased?

- What order were the questions asked in? Could an earlier question influence the answer of a later question that is central to your story or the conclusions drawn?

- Are the results based on the answers of all the people interviewed, or only a subset? If a subset, how many?

- Were the data weighted, and if so, to what?

“What’s the sample size? What was the response rate? What were the questions? What order were they asked in? Are you introducing bias? I am capable of asking any and all of these questions. It depends on the survey.” – Erik Sherman, business and technology journalist
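For releases based on a probability sample, the margin of sampling error asked about above can be approximated from the sample size alone. The sketch below is illustrative and is not part of the AAPOR or Poynter guidance. It assumes a simple random sample, a 95% confidence level (z = 1.96), and the most conservative proportion of 50%; the function name `margin_of_error` is hypothetical. It does not adjust for weighting or design effects, and it is not meaningful for nonprobability samples.

```python
import math


def margin_of_error(sample_size: int, proportion: float = 0.5, z: float = 1.96) -> float:
    """Approximate margin of sampling error for a simple random sample.

    Assumptions (not from the white paper): simple random sampling,
    a 95% confidence level (z = 1.96), and no weighting or design effect.
    Returns the margin as a fraction, e.g. 0.031 for +/-3.1 points.
    """
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    if not 0.0 <= proportion <= 1.0:
        raise ValueError("proportion must be between 0 and 1")
    return z * math.sqrt(proportion * (1.0 - proportion) / sample_size)


if __name__ == "__main__":
    # Print the approximate margin for a few common sample sizes.
    for n in (250, 500, 1000, 2000):
        print(f"n={n}: +/-{margin_of_error(n) * 100:.1f} percentage points")
```

Under these assumptions, a sample of 1,000 yields a margin of roughly ±3.1 percentage points, and quadrupling the sample size only halves the margin.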

Although it is cited less often, the National Council on Public Polls (NCPP) has published its own list of questions for journalists to ask about surveys, “20 Questions A Journalist Should Ask About Poll Results.” The list doubles as a useful FAQ for reporters because it explains why each question is important.

This is an excerpt from the free Researchscape white paper, “Amp Up News Releases with Newsmaker Surveys.”