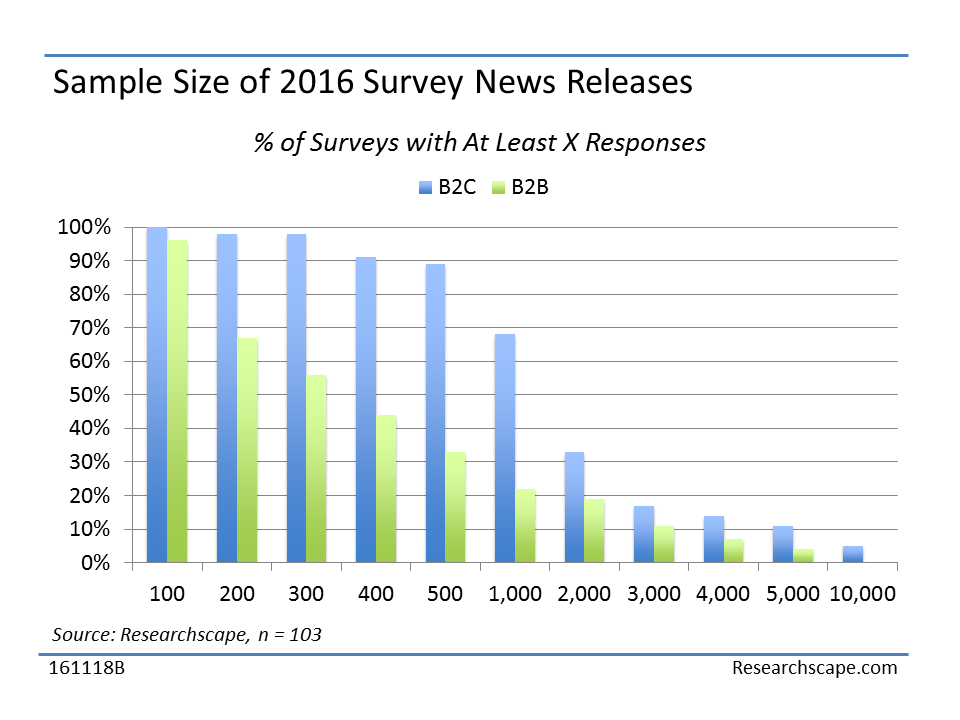

The typical survey reported in the 2016 corpus of news releases had 1,000 respondents (the median size): 73% had 500 or more responses, 55% had 1,000 or more, and 35% had 2,000 or more. The smallest sample was 98 responses.

The more responses a survey has, the greater its credibility with reporters. For instance, Kate Sullivan, an editor with the UK publication Tech Radar, commented on one survey, “The research only took in the opinions of 641 people so it’s not exactly something we’re going to submit to the Encyclopædia Britannica.” The good news is that she wrote about the survey; the bad news is that she questioned its quality. For most reporters, a consumer survey with 1,000 responses crosses a threshold that adds to its credibility.

B2B (business-to-business) surveys typically have much smaller samples than surveys of consumers, voters, or employees in general. The median B2B survey has 377 respondents vs. 1,032 respondents for a B2C (business-to-consumer) survey.

Large samples are often necessary when the behavior being measured is rare: for instance, out of 2,037 U.S. adults surveyed on behalf of the Network Branded Prepaid Card Association (NBPCA), just 91 respondents (6%) used prepaid debit cards for everyday transactions. Another reason for a large sample is to allow meaningful comparisons between subgroups. If you want to compare users of five different products, you need enough users of each product for the comparison to hold up. And if you want to report tailored results by state, as a number of major brands have done with their surveys, you need hundreds of responses in each state you report on. For instance, Bridgestone surveyed 200+ respondents in each of 20 major metropolitan areas (for a total sample of 4,044), leading to tailored coverage contrasting each city’s rate of recycling with the overall average.
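To put rough numbers on why subgroup size matters, here is a minimal sketch of the textbook margin-of-error calculation for a proportion. It is an illustration, not part of the white paper: it assumes a simple random sample, a 95% confidence level (z = 1.96), and the worst-case proportion of 50%. Many newsmaker surveys use online panels where these assumptions do not strictly hold, so treat the results as ballpark figures. The function name `margin_of_error` is hypothetical.

```python
import math


def margin_of_error(n, p=0.5, z=1.96):
    """Approximate margin of error for a proportion.

    Assumes a simple random sample of size n (an assumption, not
    something the source states). p=0.5 is the worst case and
    z=1.96 corresponds to a 95% confidence level.
    """
    return z * math.sqrt(p * (1 - p) / n)


# Sample sizes mentioned in the text above.
samples = [
    ("NBPCA full sample", 2037),
    ("Median B2C survey", 1032),
    ("Tech Radar example", 641),
    ("Median B2B survey", 377),
    ("One Bridgestone metro area", 200),
    ("NBPCA prepaid-card users", 91),
]

for label, n in samples:
    print(f"{label}: n={n}, about +/-{margin_of_error(n):.1%}")
```

Under these assumptions, a sample of about 1,000 gives a margin of error of roughly 3 percentage points, 200 respondents gives roughly 7 points, and a subgroup of 91 gives roughly 10 points. A subgroup pulled from a large survey can therefore be far less precise than the headline sample size suggests.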

This is an excerpt from the free Researchscape white paper, “Amp Up News Releases with Newsmaker Surveys.” Download your own copy now:
